Section 1 – Sound Editing

Sound in the cinema does not necessarily match the image, nor does it have to be continuous. The sound bridge is used to ease the transition between shots in the continuity style. Sound can also be used to reintroduce events from earlier in the diegesis. Especially since the introduction of magnetic tape recording after WWII, the possibilities of sound manipulation and layering have increased tremendously. Directors such as Robert Altman are famous for their complex use of the soundtrack, layering multiple voices and sound effects in a sort of “sonic deep focus.” In this clip from Nashville (1975), we simultaneously hear a conversation between an English reporter and her guide, a gospel choir singing, and the sound engineers’ chatter.

SOUND BRIDGE

Sound bridges can lead in or out of a scene. They can occur at the beginning of one scene when the sound from the previous scene carries over briefly before the sound from the new scene begins. Alternatively, they can occur at the end of a scene, when the sound from the next scene is heard before the image appears on the screen. Sound bridges are one of the most common transitions in the continuity editing style, one that stresses the connection between the two scenes since their mood (suggested by the music) is still the same. But sound bridges can also be used quite creatively, as in this clip from Yi Yi (Taiwan, 2000), where director Edward Yang uses a sound bridge to play with our expectations. The clip begins with a high-angle shot of a couple arguing under a highway. A piano starts playing and the scene cuts to a house interior, where a pregnant woman is looking at some CDs…

…finally, the camera pans to reveal a young girl (previously offscreen) playing the piano. It is only then that we realize the music is diegetic, and that the young girl was looking through the window at her best friend and the friend’s boyfriend. The romantic melody she plays as she realizes they are breaking up in turn introduces a now-possible future relationship for her, which eventually happens, as she starts dating her best friend’s ex-boyfriend later in the film.

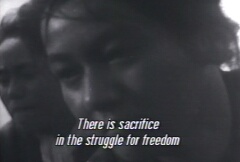

SONIC FLASHBACK

Sound from one diegetic time is heard over images from a later time. In this example from Kurosawa’s No Regrets for Our Youth (Waga seishun ni kui nashi, Japan, 1946), the heroine Yukie hears the voices of her dead father and executed husband, voicing the aspirations that sustain her continuing struggle.

Sonic flashback often carries this kind of moral or emotional overtone, making a character’s motivation explicit.

Section 2 – Source

Most basically, this category refers to the place of a sound in relation to the frame and to the world of the film. A sound can be onscreen or offscreen, diegetic or nondiegetic (including voice over); it can be recorded separately from the image or at the moment of filming. Sound source depends on numerous technical, economic, and aesthetic considerations, each of which can affect the final significance of a film.

DIEGETIC/NON-DIEGETIC SOUND

Any voice, musical passage, or sound effect presented as originating from a source within the film’s world is diegetic. If it originates outside the film’s world (as most background music does), then it is non-diegetic.

A further distinction can be made between external and internal diegetic sound. In the first clip from Almodóvar’s Women On The Verge Of A Nervous Breakdown (Mujeres al Borde de un Ataque de Nervios, 1988) we hear Iván speaking into the microphone as he works on the Spanish dubbing of Johnny Guitar (Nicholas Ray, 1954). Since he is speaking out loud and any other character could hear him, this is an example of external diegetic sound. This clip has no non-diegetic sounds other than the brief keyboard chord that introduces the scene.

The relation between sound and diegesis gets more complicated in the next clip, from Dario Argento’s The Stendhal Syndrome (La Sindrome di Stendhal, Italy, 1996). As Anna looks at Paolo Uccello’s famous painting of the Battle of San Romano (c. 1435), we begin to hear the sounds of the battle: horses whinnying, weapons clashing, etc. These sounds exist only in Anna’s troubled mind, which is highly sensitive to works of art. They are internal diegetic sounds (inside a character’s mind) that no one else in the gallery can hear.

On the other hand, Ennio Morricone’s eerie score, which sets up the scene and mixes with the battle sounds, is a common example of non-diegetic sound: sound that only the spectators can hear. (Obviously, no boom-box-blasting tourist is allowed into the Uffizi Gallery!)

DIRECT SOUND

When using direct sound, the music, noise, and speech of the profilmic event are recorded at the moment of filming. This is the opposite of postsynchronization, in which the sound is dubbed on top of an existing, silent image. Studio systems use multiple microphones to record directly and with the utmost clarity. On the other hand, some national cinemas, notably those of Italy, India, and Japan, have avoided direct sound at some stage in their histories and dubbed the dialogue onto the film after shooting. But direct sound can also mean something other than the clearly defined synchronized sound of Hollywood films: cinéma vérité, Third World filmmaking, and other documentary, improvisatory, and realist styles also record sound directly, but with an elementary microphone set-up, as in Abbas Kiarostami’s Taste of Cherry (Ta’m e Guilass, Iran, 1997).

The result maintains the immediacy of direct sound at the expense of clarity. Furthermore, incidental sounds (street noise, etc.) are not mixed down, but left as they are. Impression and mood are favored over precision: not every word can be made out. The final sonic picture is blurred and harder to understand, but arguably closer to what we perceive in real life.

NONSIMULTANEOUS SOUND

Diegetic sound that comes from a source in time either earlier or later than the images it accompanies. In this clip from Almodóvar’s Women On The Verge Of A Nervous Breakdown (Mujeres al Borde de un Ataque de Nervios, Spain, 1988) Pepa adds the female voice to the dubbing of Johnny Guitar, the male voice having previously been recorded by Pepa’s ex-lover Iván. (You can see Iván’s dubbing here.)

While Pepa’s voice is diegetic and simultaneous, Iván’s voice is diegetic yet nonsimultaneous, since it comes from a previous moment in the film. Almodóvar uses nonsimultaneous sound to stage a conversation that should have taken place but never did (Iván is not returning Pepa’s calls and she is becoming desperate); with a perverse melodramatic twist, he has the jilted lovers repeat the words of another pair of jilted cinematic lovers. As in this example, nonsimultaneous sound is often used to suggest recurrent obsessions and other hallucinatory states.

OFFSCREEN SOUND

Simultaneous sound from a source assumed to be in the space of the scene but outside what is visible onscreen. In Life on Earth (La Vie sur Terre, Abderrahmane Sissako, 1998) a telephone operator tries to help a woman get a call through. While he tries to establish a connection, the camera examines the office and the other people present in the scene. Yet, even if the operator and the woman are now offscreen, their centrality to the scene is always tangible through sounds (dialing, talking, etc.).

Of course, a film may use offscreen sound to play with our assumptions. In this clip from Women On The Verge Of A Nervous Breakdown (Mujeres al Borde de un Ataque de Nervios, Pedro Almodóvar, 1988), we hear the voices of a woman and a man in conversation, in what looks like a film production studio. Even if we do not see the speakers, we instantly believe they must be nearby. Gradually, the camera shows us that we are in a dubbing studio, and that only the woman is present, the man’s voice having been previously recorded. Moreover, theirs is not a real conversation but lines of movie dialogue.

POSTSYNCHRONIZATION DUBBING

The process of adding sound to images after they have been shot and assembled. This can include dubbing of voices, as well as inserting diegetic music or sound effects. It is the opposite of direct sound. It is not, however, the opposite of synchronous sound, since sound and image are also matched here, even if at a later stage in the editing process. Compare the French dubbed, or post-synchronized, version of Mission: Impossible 2 (John Woo, 2000), with the synchronized original.

You can hear the original English version here.

SOUND PERSPECTIVE

The sense of a sound’s position in space, yielded by volume, timbre, pitch, and, in stereophonic reproduction systems, binaural information. Used to create a more realistic sense of space, with events happening (that is, coming from) closer or further away. Listen closely to this clip from The Magnificent Ambersons (Orson Welles, 1942) as the woman goes through her door and comes back.

As soon as she closes the door her voice sounds muffled and distant (she is walking away), then grows clearer (she is coming back), then returns to full volume as she comes out. We can also hear hushing remarks that give us a sense of the absent presence of a whole web of family members in the house. The stronger the voice, the closer his/her room. Sound perspective, combined with offscreen space, also gives us clues as to who is present in a scene and, most importantly, where. Welles’ use of sound in this scene is unusual since Classical Hollywood Cinema generally sacrifices sound perspective to narrative comprehensibility.

SYNCHRONOUS SOUND

Sound that is matched temporally with the movements occurring in the images, as when dialogue corresponds to lip movements. The norm for Hollywood films is to synchronize sound and image at the moment of shooting; other national cinemas do it later (see direct sound, postsynchronization). Compare the original English version of Mission: Impossible 2 (John Woo, 2000),

with the French dubbed version.

VOICE OVER

When a voice, often that of a character in the film, is heard while we see an image of a space and time in which that character is not actually speaking. The voice over is often used to give a sense of a character’s subjectivity or to narrate an event told in flashback. It is overwhelmingly associated with genres such as film noir, with its obsessive characters and their dark pasts. It also features prominently in most films dealing with autobiography, nostalgia, and literary adaptation. In the title sequence from The Ice Storm (1997) Ang Lee uses voice over to situate the plot in time and to introduce the subject matter (i.e., the American family in the 1970s), while also giving an indication of his main character’s ideas and general culture.

While a very common and useful device, voice over is an often abused technique. Overdependence on voice over to vent a character’s thoughts can be interpreted as a telling signal of a director’s lack of creativity, or of a training in literature and theater rather than in the visual arts. But voice over can also be used in nonliteral or ironic ways, as when the words a character speaks do not seem to match the actions he/she performs. Some avant-garde films, for instance, make purposely disconcerting uses of voice over narration.

Section 3 – Quality

Much like the quality of the image, the aural properties of a sound (its timbre, volume, reverb, sustain, etc.) have a major effect on a film’s aesthetic. A film can register the space in which a sound is produced (its sound signature), or the sound can be otherwise manipulated for dramatic purposes. The recording of Orson Welles’ voice at the end of Touch of Evil (1958) adds a menacing reverb to his confession.

The mediation of Abbas Kiarostami’s voice through the walkie-talkie and the video quality of the image in the coda of Taste of Cherry (Ta’m e Guilass, Iran, 1997) underscore the reflexivity that is characteristic of his films.